Best AI Crypto Coins to Watch in 2026 — The Current State of AI Cryptocurrency

Disclaimer

This article is not a substitute for professional financial or investment advice; it is educational material about crypto and digital assets. Cryptocurrencies are extremely volatile and therefore a high-risk investment, so always stay informed about those risks. Consider investing in cryptocurrencies only after careful consideration and your own research, and at your own risk.

AI crypto coins combine machine-learning narratives with token economics, and 2026 is shaping up to be the year those two worlds collide in public view. AI tooling is getting more capable (and more widely used), while crypto keeps pushing on-chain automation, data markets, and decentralized infrastructure. Put them together and you get a simple promise: smarter systems coordinating value without a central operator. That promise is exciting—but also easy to oversell. “AI + crypto” is not one category, but a convoluted mix of compute networks, data sharing, agent tooling, and good old-fashioned hype.

AI Crypto: 2026 Overview

AI crypto coins combine (or at least claim to combine) machine-learning technology with blockchain rails. The aim is to coordinate value, incentives, and data in ways that ordinary crypto tokens usually don’t. That mix is exactly why they matter in 2026: the market has matured past the “does it work?” phase and into the “can it scale, stay secure, and stay useful?” phase.

In simple terms, AI crypto is showing up wherever networks need smarter decision-making than a basic if/then script can offer. Sometimes that means allocating resources such as compute, bandwidth, and storage more efficiently. Sometimes it’s about automating on-chain actions with fewer human bottlenecks. And sometimes it’s just a better way to coordinate lots of moving parts without relying on a single company to call the shots. That last bit is the key part: when AI meets crypto, the “brains” and the “rules” can live in different places, which changes how products are built and who gets to benefit.

The trend pushing this forward is not a single breakthrough but a pile-up of practical improvements. Models are getting cheaper to run, developer tooling is improving, and the broader crypto ecosystem is more comfortable with complex on-chain systems than it was a few years ago. At the same time, these advances raise the bar for what counts as “real” innovation, because slapping “AI” on a token is not a business model.

Market dynamics in 2026 also make this intersection appear more urgent. Traders and long-term holders aren’t only asking “Will it pump?” anymore; they’re asking “What does it do, and can it keep doing it when usage spikes?” AI-focused tokens tend to attract both camps, which can amplify volatility, but also speed up adoption when the underlying utility is there.

Why AI Crypto Coins Matter in 2026

Utility

This category of coins powers specific on-chain actions that regular “pay-and-store” cryptocurrencies don’t target. Bitcoin is designed to work as money; many AI-linked tokens are built to buy compute, route data, and coordinate agents (the things Artificial Intelligence needs to do real work). In 2026, that difference is the whole point.

Complex AI tasks are rarely one neat transaction. They’re workflows—requesting a model run, paying for inference, verifying outputs, compensating data providers, and sometimes splitting rewards among multiple contributors: developers, node operators, and even end users. AI crypto coins make those workflows programmable through smart contracts, which is why they show up in areas adjacent to decentralized finance (think automated payments, streaming fees, escrow, and usage-based billing).
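To make the workflow idea concrete, here is a minimal Python sketch of one step: settling an escrowed inference payment. It assumes a hypothetical `settle_job` function, integer token amounts, and a simple pass/fail verification flag. Real protocols implement escrow and reward splits in smart contracts with their own rules, which this sketch does not reproduce.

```python
def settle_job(price: int, verified: bool, payer: str, shares: dict[str, int]) -> dict[str, int]:
    """Release an escrowed inference payment (hypothetical model, not any real protocol).

    `price` is held in escrow in the token's smallest unit. If the output fails
    verification, the payer is refunded; otherwise the price is split among the
    contributors in proportion to their integer weights in `shares`.
    """
    if price < 0:
        raise ValueError("price must be non-negative")
    if not verified:
        return {payer: price}
    total_weight = sum(shares.values())
    if total_weight <= 0:
        raise ValueError("at least one contributor needs a positive weight")
    names = sorted(shares)
    payouts = {name: price * shares[name] // total_weight for name in names}
    # Integer division can leave a few units of "dust"; assign them to the first
    # contributor (alphabetically) so the escrow always pays out in full.
    payouts[names[0]] += price - sum(payouts.values())
    return payouts


if __name__ == "__main__":
    split = {"developer": 5, "node_operator": 3, "data_provider": 2}
    print(settle_job(1000, True, "user", split))   # {'data_provider': 200, 'developer': 500, 'node_operator': 300}
    print(settle_job(1000, False, "user", split))  # {'user': 1000}
```

Keeping amounts as integers avoids rounding drift; sweeping leftover dust to one contributor is simply one way to guarantee the escrow empties completely.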

FET (previously Fetch.ai, now Artificial Superintelligence Alliance) is a good mental model for the “agents” angle. Instead of a single app doing everything, autonomous agents can negotiate and execute tasks (like sourcing a service, booking capacity, or optimizing a route) and settle payments on-chain. SingularityNET, on the other hand, speaks to the “marketplace” angle—AI services that can be discovered and paid for without a centralized gatekeeper. Not every project nails execution, of course, but the utility thesis is clear: tokens aren’t just a ticker symbol; they are the “fuel” and permission layer for AI-native networks.

The practical implication for 2026 market trends is that utility becomes measurable. If a token is required to run inference, access an API-like service, or stake for network reliability, demand can follow real usage instead of pure speculation. That’s the bar AI crypto coins are trying to clear and the reason the category exists at all.

Network Effects

Like any other category, AI crypto coins gain value when more participants plug into the same rails, because AI and decentralization both reward scale—but in different ways. Artificial Intelligence improves with more data, more feedback loops, and more specialized services; decentralized networks improve with more nodes, more liquidity, and more integrations. Put them together, and you get compounding network effects.

On the AI side, a larger ecosystem means better coverage: more models, more tools, more agents, more niche services. On the decentralized side, interoperability and composability matter—projects can integrate into wallets, dApps, and decentralized finance protocols that already have users and capital. When an AI network’s token becomes the standard way to pay, stake, or validate work, each new integration makes the next one easier.

Real-world collaborations tend to follow the same pattern even when the details change: a model provider integrates with a decentralized compute layer, which integrates with a wallet, which integrates with an exchange, which makes onboarding easier, which increases usage. Building on that, shared standards (APIs, staking logic, and verification schemes) can improve efficiency and reduce fragmentation—two things AI ecosystems badly need in 2026.

That being said, network effects don’t guarantee quality. They do, however, amplify it when the underlying product works. That’s why the “best AI crypto coins” conversation is increasingly about ecosystems and integrations, not just whitepapers.

Token Economics

Tokenomics decides whether an AI crypto coin behaves like a useful utility token or a leaky bucket. In 2026, investors and users look beyond hype and into mechanics: how issuance works, who gets tokens and when, what holders can do with them (staking, paying fees, governance), and how value accrues back to the token rather than drifting to a separate app layer.

A few core mechanisms show up time and again:

- Token issuance and unlock schedules: Supply expansion (or large unlocks) can put pressure on prices even when the product is strong.

- Value accrual: Fees paid in-token, staking requirements, buy-and-burn, or revenue-sharing models can create persistent demand.

- Incentive design: Rewards for node operators, data providers, or developers can bootstrap growth, but can also create “mercenary” participation if incentives are too short-term.
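The interplay between issuance, unlocks, and burns is easier to see with numbers. The sketch below assumes a hypothetical schedule of (emissions, unlocks, burned) per month and shows how a single large unlock dominates supply growth in the month it lands. All figures are invented for illustration.

```python
def project_supply(circulating: int, schedule: list[tuple[int, int, int]]) -> list[int]:
    """Project circulating supply period by period.

    Each schedule entry is a hypothetical (emissions, unlocks, burned) triple for one
    period; the result starts with the current supply and adds one value per period.
    """
    path = [circulating]
    for emissions, unlocks, burned in schedule:
        circulating = circulating + emissions + unlocks - burned
        path.append(circulating)
    return path


def period_growth_pct(path: list[int]) -> list[float]:
    """Percentage change in supply for each period of a projected path."""
    return [(after - before) / before * 100 for before, after in zip(path, path[1:])]


if __name__ == "__main__":
    # Month 1: routine emissions and a small burn. Month 2: a large investor unlock.
    path = project_supply(100_000_000, [(1_000_000, 0, 500_000), (1_000_000, 10_000_000, 600_000)])
    print(path)  # [100000000, 100500000, 110900000]
    print([round(g, 2) for g in period_growth_pct(path)])  # [0.5, 10.35]
```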

Why does this matter? Because AI narratives can pump fast, and market trends can reverse just as quickly. Tokens with clearer issuance dynamics and stronger value-capture mechanisms tend to handle volatility better, especially when adoption is real. If the token is essential to pay for compute or access services, and supply shocks are limited, the economics can support a healthier long-term curve (less drama, more utility—everyone wins).

Adoption Metrics

Adoption metrics turn “AI + crypto” from a story into a scorecard. In 2026, you can’t only ask whether a token’s price moved; you have to ask whether people actually use the network and whether that usage is sticky.

The most common signals break down into a few points:

- Active users: Daily or monthly active addresses interacting with contracts tied to the AI service (payments, staking, agent registration).

- Transaction volume: Not just raw count, but meaningful transactions—service payments, inference requests, settlement flows, and recurring usage patterns.

- Industry penetration: Integrations with wallets, exchanges, and dApps; usage by developers building AI tools; presence in decentralized finance as collateral, staking asset, or payment token.

- Retention and repeat behavior: One-time spikes are easy to buy with incentives; repeat usage is harder (and more valuable).


Here’s a helpful way to think about it: price is a headline, but adoption is the operating system. As parts of the Artificial Superintelligence Alliance (ASI), Fetch.ai and SingularityNET, for example, are often discussed not only for token performance but for whether developers and users treat them as default rails for agents or AI services. When you see consistent on-chain activity tied to real workflows—payments for services, staking for reliability, and integrations that reduce onboarding friction—that’s when an AI crypto coin starts to look less like a trade and more like infrastructure.

The important detail is to watch the quality of demand. If transaction volume is mostly exchange transfers, that’s liquidity, not necessarily adoption. If the volume is linked to service execution (i.e. actual use), you’re seeing the category’s real promise: decentralized coordination for Artificial Intelligence at scale.
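One rough way to measure demand quality, assuming you already have labeled transaction data, is to compute what share of volume comes from service execution and how many users come back. The transaction fields and the `SERVICE_KINDS` labels below are hypothetical; in practice, classifying transactions requires knowing which contracts belong to the AI service.

```python
from collections import Counter

# Hypothetical labels for transactions tied to actual service execution.
SERVICE_KINDS = {"service_payment", "inference_request"}


def demand_quality(txs: list[dict]) -> dict[str, float]:
    """Summarize how much on-chain volume comes from real service usage.

    Each transaction is a dict with hypothetical keys "sender", "kind", and "amount".
    """
    total = sum(tx["amount"] for tx in txs)
    service_txs = [tx for tx in txs if tx["kind"] in SERVICE_KINDS]
    service_volume = sum(tx["amount"] for tx in service_txs)
    # Count service calls per sender to separate one-off users from repeat users.
    calls_per_user = Counter(tx["sender"] for tx in service_txs)
    repeat_users = sum(1 for calls in calls_per_user.values() if calls >= 2)
    return {
        "service_share": service_volume / total if total else 0.0,
        "service_users": len(calls_per_user),
        "repeat_users": repeat_users,
    }
```

A `service_share` close to zero suggests the volume is mostly exchange transfers (liquidity), while a growing count of repeat users is the stickier signal.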

Top AI Crypto Coins in 2026

Top-Tier Projects

Bittensor (TAO) coordinates machine learning work on-chain in a way that rewards useful intelligence. That sounds abstract, so here’s the practical angle: TAO is built around a marketplace where contributors (think model builders, data people, and infrastructure operators) compete to provide value, and the network allocates rewards based on performance. In 2026, that “incentives-first” design matters because Artificial Intelligence is not just about having models—it’s about keeping them improving, verifiable, and economically sustainable in public networks.
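As an illustration of “rewards based on performance,” the sketch below splits one period’s emission in proportion to a consensus score. This is a deliberately simplified, hypothetical scheme (the median of validator scores plus a minimum threshold), not Bittensor’s actual Yuma Consensus, which is considerably more involved.

```python
from statistics import median


def allocate_rewards(emission: float, validator_scores: dict[str, dict[str, float]],
                     min_score: float = 0.1) -> dict[str, float]:
    """Split one period's emission among contributors by consensus performance.

    validator_scores maps each validator to its scores (0..1) for each contributor.
    The consensus score is the median across validators, so with three or more
    validators a single one inflating a score cannot move the result on its own.
    Contributors whose consensus score is at or below min_score earn nothing.
    """
    contributors = {c for scores in validator_scores.values() for c in scores}
    consensus = {
        c: median(scores.get(c, 0.0) for scores in validator_scores.values())
        for c in contributors
    }
    eligible = {c: s for c, s in consensus.items() if s > min_score}
    total = sum(eligible.values())
    if total == 0:
        return {c: 0.0 for c in contributors}
    return {c: emission * eligible.get(c, 0.0) / total for c in contributors}
```

For example, a contributor scored 0.0, 0.05, and 0.95 by three validators gets a median of 0.05 and earns nothing, even though one validator rated it highly.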

NEAR Protocol sits in a slightly different lane, but it keeps showing up in AI conversations for a simple reason: developers need fast, low-friction rails to deploy consumer-facing apps. AI apps tend to generate lots of small interactions (logins, micro-payments, identity checks, provenance receipts), and that’s where a high-throughput Layer 1 with a friendly developer experience can become the quiet winner. The important detail is that NEAR’s role is less “AI model coin” and more “AI application and data economy plumbing,” which can translate into resilient demand if AI-native consumer apps keep expanding through 2026.

Render focuses on the compute side, with a clear real-world hook: GPU power. As generative AI workloads grow (training, fine-tuning, inference, and the never-ending need to render and simulate) while demand for GPU power in more “classic” tasks persists, markets tend to reward networks that can route jobs efficiently and pay providers reliably.

A simple way to think about these three is like building a modern AI product. Bittensor is the incentive engine for intelligence, NEAR Protocol is the app layer where users actually show up, and Render is the GPU muscle that keeps the lights on. If AI adoption keeps accelerating into 2026, these “top-tier” roles can remain sticky—because you can swap a model, but you can’t easily swap the underlying economic and infrastructure primitives once ecosystems form around them.

Emerging Projects


ChainGPT highlights why “emerging” matters in 2026: upside often lives where narratives are still forming, but so do the sharp edges. CoinGecko’s Artificial Intelligence category data flags a moderate dilution risk for ChainGPT based on its fully diluted valuation (FDV) compared with its current market capitalization (the value of the tokens already in circulation). In plain English: if more tokens are scheduled to unlock over time, the market may have to absorb extra supply, and the price can feel that pressure even if the product is improving.
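The dilution signal comes down to two numbers: market capitalization (price times circulating supply) and FDV (price times maximum supply; some data providers use total supply instead). A minimal sketch with made-up inputs follows; the thresholds a data provider uses to label risk as “moderate” are not reproduced here.

```python
def dilution_metrics(price: float, circulating_supply: float, max_supply: float) -> dict[str, float]:
    """Market cap, FDV, and how much of the eventual supply is already trading."""
    if price <= 0 or max_supply <= 0:
        raise ValueError("price and max_supply must be positive")
    market_cap = price * circulating_supply
    fdv = price * max_supply
    return {
        "market_cap": market_cap,
        "fdv": fdv,
        # The price cancels out, so this equals circulating_supply / max_supply.
        "mcap_to_fdv": market_cap / fdv,
        # Fraction of max supply that still has to reach the market.
        "not_yet_circulating": 1 - circulating_supply / max_supply,
    }


if __name__ == "__main__":
    # Hypothetical token: $0.10 price, 600M circulating out of 1B max supply.
    print(dilution_metrics(0.10, 600_000_000, 1_000_000_000))
```

A low market-cap-to-FDV ratio means a large share of the supply has yet to reach the market, which is exactly the overhang the paragraph above describes.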

Granted, dilution is not automatically “bad,” but it changes the math for potential investors. If a project uses future token emissions to fund development, incentives, or ecosystem grants, that can accelerate adoption. However, if demand doesn’t grow at the same pace as supply, market capitalization can lag, and early momentum can fade. In 2026, when AI narratives move fast and attention rotates quickly, token schedule dynamics can be just as important as demos and partnerships.
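The supply-versus-demand point can be made precise with a simple accounting identity, assuming market capitalization is used as a rough proxy for demand. With price P, circulating supply S, and market cap M = P × S, let supply grow by g_S and market cap by g_M over one period:

```latex
P_t = \frac{M_t}{S_t}, \qquad
\frac{P_1}{P_0}
  = \frac{M_1 / S_1}{M_0 / S_0}
  = \frac{(1 + g_M)\,M_0 \,/\, \big((1 + g_S)\,S_0\big)}{M_0 / S_0}
  = \frac{1 + g_M}{1 + g_S}
```

For example, if circulating supply grows 20% (g_S = 0.20) while market cap grows 10% (g_M = 0.10), then P_1/P_0 = 1.10/1.20 ≈ 0.917: the price falls by roughly 8.3% even though total demand rose.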

AI Agents Projects

The ASI participants make the “AI agents” category feel less like sci-fi and more like software you can actually monetize. AI agents are essentially autonomous programs that can plan, make decisions, call tools, and transact—sometimes without a human clicking every button. In 2026, that matters because AI is moving from “chat with a model” to “delegate a task,” and delegation needs payments, identity, coordination, and, ideally, some guardrails.

ASI’s angle is coordination: agents that can negotiate, schedule, and optimize across networks (think travel booking, logistics routing, machine-to-machine commerce). The mechanism is the interesting part—agents don’t just compute, they interact. That interaction creates a need for predictable settlement (paying for services), and crypto rails can make those micro-transactions feasible without traditional banking friction. If agents become common in consumer apps, networks that support agent-to-agent markets can see demand that isn’t purely speculative.

The Virtuals Protocol, an agent-focused alternative to the ASI coalition, lets pretty much anyone create, tokenize, and monetize their own AI agents for gaming, entertainment, and virtual environments. The big 2026 trend here is composability: agents rarely do “one thing.” They chain capabilities (summarize, search, verify, generate, execute), and a marketplace model can turn those capabilities into modular building blocks. Composability also spreads risk: if one model provider’s quality drops, an agent can route to another service without rewriting the entire product.

Projects that encourage verification, reputation, and constrained permissions will likely age better as regulators and users demand more accountability and safety from agent providers. Imagine giving an intern a company card: you want them to be proactive, but you also want spending limits and receipts. AI agents in crypto work best when the “company card” is programmable and auditable.
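The company-card analogy maps directly onto code. Below is a minimal sketch of a programmable spending guardrail for an agent, with a per-transaction cap, a daily cap, a payee allowlist, and a receipt log. `AgentBudget` and its fields are hypothetical; on a real network these checks would live in a smart contract or wallet policy rather than in an in-memory Python object.

```python
from dataclasses import dataclass, field


@dataclass
class AgentBudget:
    """Hypothetical spending guardrail for an autonomous agent (not any project's real API)."""
    per_tx_limit: int
    daily_limit: int
    allowed_payees: set[str]
    spent_today: int = 0
    receipts: list[tuple[str, int]] = field(default_factory=list)

    def pay(self, payee: str, amount: int) -> bool:
        """Approve and record a payment only if every guardrail passes."""
        if amount <= 0 or payee not in self.allowed_payees:
            return False
        if amount > self.per_tx_limit or self.spent_today + amount > self.daily_limit:
            return False
        self.spent_today += amount
        self.receipts.append((payee, amount))  # auditable receipt for every approved spend
        return True

    def reset_day(self) -> None:
        """Start a new spending window (e.g. called once per day)."""
        self.spent_today = 0
```

Every rejected payment leaves the budget untouched, and every approved one leaves a receipt: proactive where allowed, bounded everywhere else.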

Decentralized Compute Projects

Akash Network represents the straightforward thesis behind decentralized compute: AI demand is outgrowing comfortable supply chains. Training and inference need compute, and centralized providers can be expensive, capacity-constrained, or simply unavailable in certain regions and scenarios. In 2026, decentralized compute becomes critical because AI workloads aren’t only for big labs anymore—they’re for startups, games, creators, and businesses that want predictable pricing and flexible capacity.

The “how” is basically a marketplace for compute resources. Providers contribute spare capacity; users rent it for workloads. Decentralized compute tries to turn idle GPUs and CPUs into supply that anyone who needs it can rent, priced by market dynamics. The aim is not only to be cheaper but also to be more resilient and permissionless.
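The marketplace mechanics can be sketched as a greedy matcher that fills a GPU request from the cheapest offers at or below the user’s maximum price. The `Offer` type and `match_request` function are hypothetical simplifications; networks such as Akash Network run their own order, bid, and lease workflow, which this does not reproduce.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Offer:
    provider: str
    gpus: int
    price_per_gpu_hour: float


def match_request(offers: list[Offer], gpus_needed: int,
                  max_price: float) -> Optional[list[tuple[str, int, float]]]:
    """Greedily fill a GPU request from the cheapest eligible offers.

    Returns (provider, gpus_taken, price) fills, or None if there is not enough
    capacity at or below max_price.
    """
    fills = []
    remaining = gpus_needed
    for offer in sorted(offers, key=lambda o: o.price_per_gpu_hour):
        if remaining <= 0:
            break
        if offer.price_per_gpu_hour > max_price:
            break  # offers are sorted ascending, so every later one is also too expensive
        take = min(offer.gpus, remaining)
        fills.append((offer.provider, take, offer.price_per_gpu_hour))
        remaining -= take
    return None if remaining > 0 else fills
```

Because providers compete on price, cheaper spare capacity fills first; if demand outruns supply under the user’s limit, the request simply fails instead of overpaying.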

Use cases in 2026 get surprisingly practical: running inference for an AI chatbot, hosting embeddings for search, fine-tuning a model for a niche business, or spinning up short bursts of compute for media generation. And because these jobs can be elastic (more demand during product launches, less at night), market-based provisioning can fit better than long-term enterprise contracts. That elasticity is a big deal if you’re building AI products that have unpredictable traffic.

A grounded 2026 takeaway: decentralized compute is one of the few AI-crypto niches where “real usage” is measurable. Jobs executed, uptime, cost per hour, repeat customers—those are the receipts. If you’re evaluating crypto investments here, look for evidence that the network is doing actual work, not just launching announcements.

Data and Indexing Projects

The Graph (GRT) makes the “data indexing” category impossible to ignore because AI systems depend on retrieval as much as on generation. In 2026, many useful AI apps lean heavily on RAG (retrieval-augmented generation), meaning they constantly fetch relevant context (wallet activity, smart contract events, user permissions, and historical states) before they respond or act. Data indexing is the unglamorous layer that makes that retrieval fast, structured, and reliable.

The Graph’s core job is to index blockchain data so applications can query it efficiently. Without indexing, pulling meaningful information from raw chain data can be slow, expensive, and developer-hostile. With indexing, developers can build features like “show me all swaps for this wallet,” “track protocol fees over time,” or “detect anomalies” without reinventing a custom data pipeline. And yes, AI loves this because agents and assistants need timely, clean inputs to make decent decisions.
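Indexing itself is a simple idea: pay the cost of scanning raw data once, then answer queries with lookups. The Graph does this with subgraphs (a schema plus mapping handlers) that are queried over GraphQL; the Python sketch below only illustrates the underlying idea in memory, with hypothetical event fields `wallet`, `type`, and `block`.

```python
from collections import defaultdict


def build_index(events: list[dict]) -> dict[tuple[str, str], list[dict]]:
    """Group raw events by (wallet, event type) in a single pass over the chain data."""
    index = defaultdict(list)
    for event in events:
        index[(event["wallet"], event["type"])].append(event)
    return dict(index)


def swaps_for(index: dict[tuple[str, str], list[dict]], wallet: str) -> list[dict]:
    """Answer "show me all swaps for this wallet" with a lookup instead of a full scan."""
    return index.get((wallet, "Swap"), [])
```

The scan happens once when data arrives; after that, each query costs a dictionary lookup rather than a pass over every event ever emitted.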

In 2026, the market trend is toward more chains, more apps, and more data. That multiplies the value of standardized indexing because fragmentation is the enemy of usability. The important detail is that data indexing isn’t only for dashboards anymore—it’s for automated systems. AI agents that rebalance portfolios, monitor risk, or execute strategies need high-confidence data feeds, and indexing layers can become the default “memory” for on-chain activity.

If blockchains are books, indexing is the table of contents plus the search function. You can read every page to find one quote, but you won’t do it for long. Data projects win when they reduce friction so dramatically that everyone quietly relies on them.

For crypto investments, data and indexing projects tend to have a different risk profile than flashy model tokens. The upside comes from becoming a standard, and standards often compound. If AI keeps pushing more automation on-chain in 2026, dependable data indexing can be one of the most practical, revenue-adjacent foundations in the entire Artificial Intelligence crypto stack.

How to Evaluate AI Crypto Coins?

Category Fit

Naturally, the few highlights we provided barely scratch the surface of the category. AI crypto coins capture multiple narratives at once—Artificial Intelligence, DePIN, data marketplaces, compute networks, and sometimes plain old “AI hype”—so category fit is your first filter if you are looking to put some eggs in this particular basket. First of all, decide what you’re actually investing in: a protocol token that powers usage, an equity-like narrative token that rides market trends, or a utility chip for paying fees on smart contracts.

Start with a simple question: what does the token need to be for the project to function? Tokens like The Graph (indexing/querying) are usually easiest to place because the category is clear: infrastructure that developers consume. ASI leans into AI service marketplaces (more application-layer), while Ocean Protocol sits closer to data exchange rails. If your target category is “AI infrastructure,” a token that primarily markets a chatbot brand may be a mismatch.

Now bring market trends into the picture. In 2026, “AI” can mean model hosting, inference marketplaces, agent coordination, verifiable ML, and data provenance. Technological advancements matter here because a token that made sense in the “LLM API wrapper” era can look thin if open-weight models and on-device inference reduce demand for centralized compute. Your job is to ask whether the coin’s category thesis survives a changing AI stack.

Product and Usage

AI crypto coins earn their keep when the product creates real on-chain demand, not just a good demo video. A clean way to evaluate product strength is to map “user action → on-chain action → token necessity.” If the token never becomes the default payment/settlement unit inside the product, you may be looking at optional tokenomics rather than required utility.

For AI-specific scenarios, focus on compute, data, and model services. Ocean Protocol is the classic example of AI-adjacent utility: data access and monetization can be the bottleneck for model training, and the product narrative is easy to test—are datasets being consumed, and do payments route through the token or a smart-contract-enforced mechanism?

Here’s the key part: AI coins often promise AI model interoperability, and you should treat that like a technical claim, not a slogan. Interoperability can mean (a) shared identity and permissions for agents, (b) common formats for prompts/outputs, (c) cross-chain settlement for AI services, or (d) verifiable execution proofs. Ask what exactly is interoperable and what’s just “we’ll integrate later.” If the product depends on interoperability but lacks standards, SDK adoption, or developer tooling, the usage thesis is fragile.

A practical test is to imagine a regular work pipeline. A startup wants to query The Graph for on-chain data, purchase a dataset via Ocean Protocol, and run an agent workflow that calls a model marketplace like SingularityNET. Where does the token enter the flow—fees, staking for quality, reputation, or dispute resolution? If you can’t point to the moment the token is required, your investment is mostly narrative exposure, not product-driven demand.
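The “where does the token enter the flow” test can be written down as a small checklist. In the sketch below, each pipeline step records the user action, the on-chain action, the token’s role, and whether the token is strictly required. The `Step` type is hypothetical, and the example values are placeholders you would fill in from each project’s documentation, not claims about any specific network.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Step:
    user_action: str
    onchain_action: str
    token_role: Optional[str]  # e.g. "fees", "staking", "reputation", "disputes"; None if absent
    token_required: bool       # False if another asset or an off-chain payment works as well


def token_touchpoints(steps: list[Step]) -> dict[str, object]:
    """Sort pipeline steps into required and optional token touchpoints."""
    required = [s.user_action for s in steps if s.token_required]
    optional = [s.user_action for s in steps if s.token_role and not s.token_required]
    return {
        "required": required,
        "optional": optional,
        # No step that strictly needs the token means the exposure is mostly narrative.
        "narrative_only": not required,
    }


if __name__ == "__main__":
    # Placeholder assessments for a hypothetical pipeline.
    pipeline = [
        Step("query indexed chain data", "query fee payment", "fees", True),
        Step("buy a dataset", "access purchase", "fees", False),
        Step("run an agent workflow", "service call", None, False),
    ]
    print(token_touchpoints(pipeline))
```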

Supply and Emissions

Tokenomics determines whether adoption helps the token or just helps the app. Supply and emissions are especially sensitive for AI projects because computational demands create ongoing costs: compute providers want predictable payouts, and users want predictable pricing. If emissions are too aggressive, you get short-term growth with long-term value leakage. If emissions are too tight, you might starve the network and push activity off-chain.

Start by unpacking the supply schedule: max supply, current circulating supply, vesting cliffs, and who gets what (team, investors, ecosystem, mining/rewards). Then connect it to AI economics. A network paying for GPU inference via token rewards needs to match emissions to real demand; otherwise, it’s basically subsidizing usage with inflation. That can work early, but only if the subsidy tapers as fees take over.
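To turn a supply schedule into a circulating-supply curve, you can model each allocation as a cliff followed by linear vesting. This minimal sketch assumes that common pattern (nothing before the cliff, the amount accrued so far released at the cliff, linear release afterwards); real schedules vary, and the allocations in the usage example are invented.

```python
def vested(allocation: int, month: int, cliff_months: int, vesting_months: int) -> int:
    """Tokens unlocked `month` months after launch under a cliff plus linear vesting.

    Assumes nothing unlocks before the cliff, the amount accrued so far unlocks at
    the cliff, and the rest vests linearly until `vesting_months`.
    """
    if month < cliff_months:
        return 0
    if month >= vesting_months:
        return allocation
    return allocation * month // vesting_months


def circulating_at(month: int, initial: int, tranches: dict[str, tuple[int, int, int]]) -> int:
    """Initial float plus everything vested so far.

    `tranches` maps a holder group to (allocation, cliff_months, vesting_months).
    """
    return initial + sum(vested(a, month, c, v) for a, c, v in tranches.values())


if __name__ == "__main__":
    tranches = {"team": (200_000_000, 12, 36), "investors": (150_000_000, 6, 24)}
    for m in (5, 6, 12, 36):
        print(m, circulating_at(m, 400_000_000, tranches))
```

Printing the curve month by month makes the cliffs visible as jumps, which is where unlock-driven price pressure tends to concentrate.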

A helpful mental model is “AI tokens are fuel and payroll.” Fuel (fees) scales with usage. Payroll (emissions) scales with incentives and supply schedules. Look for mechanisms that convert usage into buy-and-burn, fee redistribution, or locked staking demand, ideally enforced by smart contracts rather than promises. Also ask: are emissions tied to measurable work (compute delivered, queries served, data provided), or are they blanket rewards for “participation”? AI networks can get spammy quickly, so emissions should be conditional—proof-of-work (in the generic sense), reputation, slashing, or verification.
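The fuel-and-payroll framing suggests a simple health check: what share of contributor pay comes from emissions rather than fees, and is that share falling over time? A minimal sketch, assuming fees and emissions are measured in the same unit for each period:

```python
def subsidy_ratios(periods: list[tuple[float, float]]) -> list[float]:
    """For each (fee_revenue, emissions) period, the share of contributor pay funded by new issuance.

    1.0 means everything is paid from emissions ("payroll"); 0.0 means fees ("fuel") cover it all.
    """
    ratios = []
    for fees, emissions in periods:
        total = fees + emissions
        ratios.append(emissions / total if total else 0.0)
    return ratios


def is_tapering(ratios: list[float]) -> bool:
    """True if the subsidy share never rises from one period to the next."""
    return all(later <= earlier for earlier, later in zip(ratios, ratios[1:]))
```

A series such as 0.9, 0.7, 0.45 is the healthy pattern the paragraph describes: the subsidy tapers as fees take over.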

Incentive Design

This is the difference between a useful AI network and a token farm with a fancy UI. The “what” is simple: rewards should attract the right contributors (compute, data, developers, evaluators), and penalties should discourage low-quality output. The “how” is where most projects get messy.

AI coins typically need multi-sided incentives. Compute providers need compensation and uptime bonuses. Data providers need licensing and revenue shares. Developers need grants or fee rebates. Users need predictable pricing. The “tokenomics puzzle” is to balance these without printing tokens endlessly. A strong design usually mixes: (1) fee-based demand, (2) staking for quality/security, and (3) rewards that taper as the marketplace matures.

Quality evaluation is not unique to AI, but this category calls for explicit evaluation mechanisms. Look for incentive hooks around benchmarking, human or agent evaluators, or cryptographic verification where possible. If the project claims “verifiable inference,” ask what is actually verifiable today and what’s roadmap-only. A “trust me, it’s good” marketplace is fragile.
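Stake-and-slash incentives are easiest to reason about as an expected-value calculation. The sketch below assumes a hypothetical provider that earns a fixed reward for work that passes verification and loses a fraction of its stake for work that fails; the parameters are illustrative, not any network’s actual values.

```python
from dataclasses import dataclass


@dataclass
class Provider:
    stake: float
    earned: float = 0.0


def settle_task(provider: Provider, reward: float, passed_check: bool, slash_fraction: float = 0.1) -> None:
    """Pay for verified work; slash a fraction of the current stake when verification fails."""
    if not 0.0 <= slash_fraction <= 1.0:
        raise ValueError("slash_fraction must be between 0 and 1")
    if passed_check:
        provider.earned += reward
    else:
        provider.stake -= provider.stake * slash_fraction


def expected_profit(pass_rate: float, reward: float, stake: float, slash_fraction: float = 0.1) -> float:
    """Expected gain per task, ignoring that repeated slashing shrinks the stake."""
    return pass_rate * reward - (1 - pass_rate) * stake * slash_fraction
```

With 1,000 tokens staked, a reward of 5, and a 10% slash, a provider passing 99% of checks expects about +3.95 per task, while one passing 90% expects about -5.5: low-quality spam stops paying.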

Also check whether the incentives create value retention. Staking lockups, fee discounts for paying in-token, and governance rights can all increase holding demand. But beware of “discount only” utility—if users can pay in stablecoins with negligible friction, the token may not capture much value.

Finally, keep regulatory compliance in mind. Incentives that look like guaranteed yields, especially when marketed aggressively, risk being treated as securities and can become a liability. Good projects design rewards as compensation for useful work, not as passive promises.

Decentralization

Decentralization in AI crypto coins is not a purity contest; it’s a risk-management tool. Claims about democratization and accessibility cost nothing, so identifying which layer is actually decentralized is a necessary part of evaluation: it can be governance, compute supply, data access, model hosting, or settlement. Many AI projects decentralize the token while keeping the AI service centralized, which can be fine for early product-market fit but changes the risk profile from the word “go.”

Security is the most practical reason to care. If critical infrastructure runs through a single coordinator, a single API key, or a small set of nodes, you have single points of failure. For something like The Graph, decentralization has a tangible meaning: independent indexers and delegators provide redundancy, and incentives align around serving correct data. That’s a clearer security story than “we have a token, therefore we’re decentralized.”

Governance is the second reason. If a small team can change fees, emissions, or listing rules unilaterally, the token’s value is exposed to managerial risk. With AI networks, that risk can be bigger because policies affect content moderation, model access, and data licensing—topics that can quickly collide with regulation and platform pressures.

You don’t need perfection on day one, but you do need an honest path. If decentralization is always “coming next quarter,” treat it like a roadmap promise and price it as such.

And yes, decentralization also affects regulatory compliance. Projects with centralized control may be easier to regulate (and to pressure). More distributed systems can be more resilient, but they must still handle real-world constraints like sanctions screening and data rights.

Partnerships and Integrations

Partnerships matter in the AI crypto niche just like everywhere else because distribution and credibility are hard to win. A project can build the cleanest protocol in the world, but if it doesn’t integrate with wallets, data providers, compute platforms, and developer tooling, adoption stays theoretical.

The trick is to grade partnerships by integration depth. A logo on a slide deck is not the same as a live integration where the token is used for fees, a joint product workflow, or an SDK that developers actually ship with.

For AI-specific technologies, look for integrations that unlock interoperability: shared agent frameworks, data pipelines, model registries, and on-chain identity/permissions. If a coin claims AI model interoperability, partnerships should show up as concrete connectors—think “you can call this model marketplace from this agent framework” rather than “we’re exploring synergies.”

Also ask the uncomfortable question: does the partnership reduce regulatory compliance risk or increase it? A partnership with a compliance-forward custodian, auditor, or enterprise data provider can make the project easier to use in regulated settings. On the other hand, partnerships that imply access to copyrighted or sensitive datasets can create headline risk that spooks liquidity.

Finally, measure follow-through. If a project announces integrations frequently but the product UX remains unchanged, that’s a signal. Real integrations leave footprints: docs, releases, and users.

Roadmap and Delivery

Polygon 2.0 Roadmap as an example

Speaking of which, roadmaps in general are cheap; delivery is the asset. Evaluating AI crypto coins here means comparing promised AI advancements with what’s actually shipped—especially around interoperability, verifiable inference, data provenance, and real usage metrics.

Start with the project’s historical cadence. How often do they ship releases? Are milestones met close to the dates, or perpetually “in progress”? You don’t need perfection, but you do want a pattern that suggests competent execution. AI moves fast, and missed cycles can make a product obsolete even if the blockchain side is solid.

Now translate roadmap items into testable deliverables. “Agent framework v2” is vague. “New smart contracts enabling fee routing and staking with audited code” is concrete. “Model interoperability” should come with SDKs, standards, and working integrations—not just a new whitepaper diagram.

Also check whether the roadmap aligns with tokenomics. If the next year focuses on user growth, are incentives budgeted responsibly, or will emissions spike? If the roadmap promises enterprise adoption, is regulatory compliance (licensing, privacy, auditability) part of the plan or an afterthought?

Finally, watch for dependency risk. Many AI roadmaps rely on external breakthroughs (cheaper compute, better proofs, new standards). Good teams acknowledge dependencies and build incremental value anyway, rather than betting everything on one moonshot.

Liquidity and Market Structure

Liquidity is your reality check, because even the best AI thesis can turn into a bad trade if market structure is fragile. Start with the basics: where is the token traded, what’s the spread, what’s daily volume, and how concentrated is liquidity in one venue. Thin liquidity amplifies volatility, and AI coins can already be momentum-driven due to market trends.
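Spread and venue concentration are straightforward to compute once you have order-book and volume data. A minimal sketch with hypothetical inputs; the Herfindahl-Hirschman index (HHI) used here is the sum of squared volume shares, so it ranges from 1/n (volume split evenly across n venues) to 1 (a single venue).

```python
def spread_bps(best_bid: float, best_ask: float) -> float:
    """Bid-ask spread in basis points of the mid price."""
    if best_bid <= 0 or best_ask < best_bid:
        raise ValueError("need 0 < best_bid <= best_ask")
    mid = (best_bid + best_ask) / 2
    return (best_ask - best_bid) / mid * 10_000


def venue_concentration(volume_by_venue: dict[str, float]) -> tuple[float, float]:
    """Return (largest venue's share of volume, HHI of volume shares)."""
    total = sum(volume_by_venue.values())
    if total <= 0:
        raise ValueError("total volume must be positive")
    shares = [v / total for v in volume_by_venue.values()]
    return max(shares), sum(s * s for s in shares)


if __name__ == "__main__":
    print(spread_bps(0.995, 1.005))  # about 100 bps
    print(venue_concentration({"venue_a": 700.0, "venue_b": 200.0, "venue_c": 100.0}))
```

A wide spread combined with one venue holding most of the volume is the fragile market structure the paragraph warns about.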

For AI coins, also consider “utility-driven liquidity.” If the token is used inside smart contracts for fees/staking, organic demand can create a floor. If the token is mostly a governance badge, liquidity depends more on sentiment.

Governance

Last but not least, governance keeps AI crypto coins adaptable, which matters because Artificial Intelligence technology changes faster than most on-chain protocols. The goal is to make sure the rules that affect value (fees, emissions, treasury use, listings, and upgrades) can evolve without turning into chaos or into a startup with a token.

First, identify the governance model: token-weighted voting, delegated governance, multisig-controlled with a transition plan, or hybrid. Then evaluate who actually has power today. If voting is technically open but token distribution is concentrated, governance may be decentralized in branding only. If a foundation can veto proposals, that’s not always bad—especially for security—but it should be explicit.
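One way to check whether governance is decentralized in practice is to count how few voters would be needed to pass a proposal on their own. The sketch below computes a Nakamoto-coefficient-style figure, assuming a simple-majority threshold and voting power already aggregated by delegate (delegated tokens count toward the delegate, not the original holder). Real DAOs often add quorums or supermajority rules.

```python
def nakamoto_coefficient(voting_power: list[float], threshold: float = 0.5) -> int:
    """Smallest number of voters whose combined power exceeds `threshold` of the total."""
    total = sum(voting_power)
    if total <= 0:
        return 0
    running = 0.0
    # Add voters from largest to smallest until they control more than the threshold.
    for count, power in enumerate(sorted(voting_power, reverse=True), start=1):
        running += power
        if running > threshold * total:
            return count
    return len(voting_power)


if __name__ == "__main__":
    print(nakamoto_coefficient([40, 15, 10, 10, 10, 10, 5]))  # 2
```

A result of 1 or 2 means a handful of wallets can decide outcomes, whatever the governance branding says.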

AI-specific governance questions tend to be spicier. How are models listed or delisted? How are disputes handled when outputs are low-quality or harmful? How are data rights enforced? These are not abstract debates; they’re product and legal risk. Governance must balance openness with regulatory compliance, or the network can become unusable for serious users.

Look for governance that is constrained by smart contracts where appropriate (timelocks, caps on parameter changes, transparent treasury flows). A timelock on upgrades gives users time to react—simple, boring, effective. Also examine whether governance changes can break tokenomics: can voters crank emissions to buy short-term growth? If yes, are there guardrails?
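A timelock plus a cap on parameter changes can be sketched in a few lines. `Timelock` below is hypothetical and keeps state in memory with integer timestamps; on-chain implementations enforce the same kind of rules in contract code, and the 20% cap and two-day delay in the usage example are arbitrary.

```python
from dataclasses import dataclass, field


@dataclass
class Timelock:
    """Hypothetical governance timelock with a cap on per-proposal parameter changes."""
    delay: int                 # seconds a queued change must wait before execution
    max_change: float          # largest allowed relative change, e.g. 0.2 = +/-20%
    params: dict[str, float]
    queue: dict[str, tuple[float, int]] = field(default_factory=dict)

    def propose(self, name: str, new_value: float, now: int) -> None:
        """Queue a change if it stays within the cap (the cap is skipped when the old value is 0)."""
        old = self.params[name]
        if old != 0 and abs(new_value - old) / abs(old) > self.max_change:
            raise ValueError(f"change to {name} exceeds the {self.max_change:.0%} cap")
        self.queue[name] = (new_value, now + self.delay)

    def execute(self, name: str, now: int) -> None:
        """Apply a queued change once its delay has elapsed."""
        new_value, eta = self.queue[name]
        if now < eta:
            raise PermissionError(f"{name} is timelocked until {eta}")
        self.params[name] = new_value
        del self.queue[name]


if __name__ == "__main__":
    lock = Timelock(delay=172_800, max_change=0.2, params={"emission_rate": 100.0})
    lock.propose("emission_rate", 115.0, now=0)
    lock.execute("emission_rate", now=172_800)  # two days later
    print(lock.params)
```

The cap stops voters from cranking emissions in one step, and the delay gives users a window to react before any change takes effect.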

Finally, check participation incentives. Delegation, staking-linked voting, and clear proposal processes increase the odds that governance is functional. If governance is technically “on,” but nobody votes and a few insiders decide everything, sustainability is weaker than it looks—especially in fast-moving AI markets.

Catalysts and Market Conditions That Can Trigger an AI Coin Breakout

Compute Supply Shocks

Compute supply shocks change AI coin economics by shifting the real-world cost and availability of GPUs (and other accelerators) that power Artificial Intelligence workloads. When the price of compute moves, everything downstream moves with it: node profitability, inference pricing, developer demand, and ultimately market trends around which networks look “cheap” or “expensive” relative to the value they deliver.

Here’s the thing: AI coins that rely on decentralized GPU supply tend to react to both scarcity and abundance, just in different ways. A global chip shortage (or even a logistics bottleneck) can make centralized cloud compute more expensive and harder to access, which nudges teams toward decentralized alternatives—if those networks can still source hardware. On the other hand, a wave of next-gen GPU technology can create a different kind of shock: older cards get pushed into secondary markets, and suddenly a lot of “good enough” compute shows up at lower cost (which can expand network capacity fast).

Supply shocks do not just move costs; they change behavior. When GPUs are scarce, providers may hoard capacity, lock it behind longer commitments, or demand higher rewards, which can tighten available compute and cause service quality swings (latency, uptime, throughput). When GPUs flood the market, providers compete harder on pricing, networks can onboard more nodes, and AI coins tied to usage fees can see higher volumes if demand follows.

AI Model Release Cycles

New model releases can act like “attention earthquakes” that redirect capital, developer time, and user demand across the entire Artificial Intelligence stack. A next-gen model launch from a major company tends to do two things at once: it resets what users consider “normal” performance, and it reshapes the cost curve of delivering that performance in production. AI coins that sit anywhere near inference, data, fine-tuning, or compute marketplaces can benefit, or get exposed.

So, model launches often create a predictable scramble: developers rush to build wrappers, agents, plug-ins, and vertical apps, and they need infrastructure yesterday. If a decentralized network can offer competitive latency, stable uptime, and clear pricing, the demand spike can translate into higher on-chain activity and stronger liquidity as traders anticipate growth. If the network can’t meet service expectations, the market reaction is usually swift.

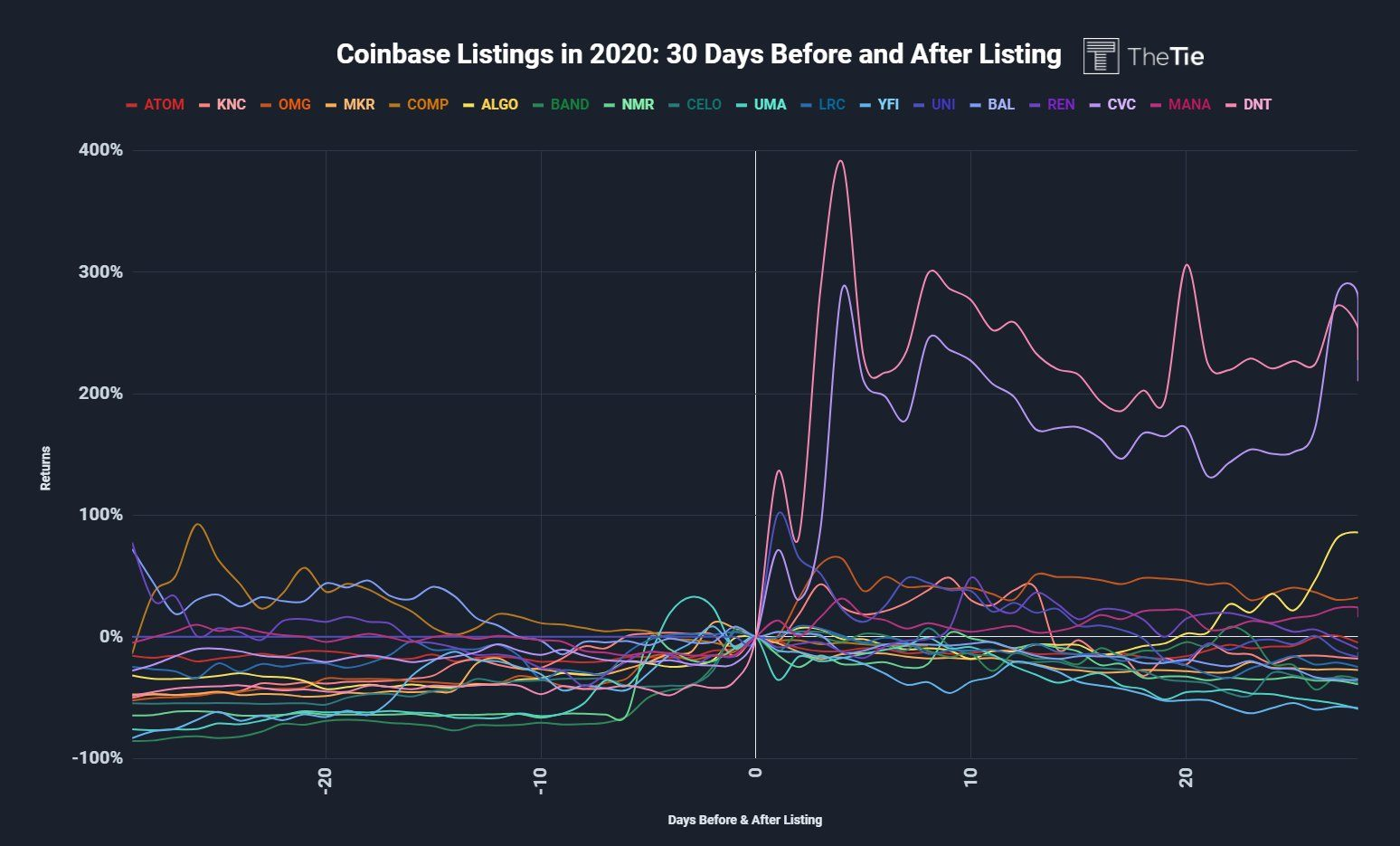

Exchange Listings

Exchange listings increase accessibility and liquidity, which is why they’re such a classic breakout catalyst for AI coins, especially smaller cap ones. When a token gets listed on a major platform, more people can buy it with fewer steps, spreads often tighten, and volume typically rises—sometimes dramatically—because the asset is now plugged into the exchange’s distribution and trading rails.

Source: FX Street

Listings are not just “more buyers.” They also change the microstructure: more market makers step in, derivatives may appear later, and the token becomes easier to hedge or arbitrage. That can reduce friction for larger traders, which matters because thin liquidity is one of the biggest reasons smaller AI coins stay range-bound even when their underlying product is improving.

For AI coins specifically, listings can be extra potent when they coincide with visible adoption signals like rising network fees, more active nodes, or new developer integrations. If the listing makes the token easy to acquire right when users need it to pay for inference/compute/data access, you get a cleaner demand loop. If the token is mostly a governance chip with little immediate utility, the listing may still pump—though it’d be more vulnerable to a sharp reversal.

Regulatory Developments

Regulatory developments can unlock adoption just as easily as they can freeze it, because AI coins sit at the intersection of crypto rules and Artificial Intelligence policy. Jurisdiction-by-jurisdiction clarity affects where exchanges list, where funds allocate, and whether teams feel safe building consumer-facing products that use tokens for payments, access, or governance.

When it comes to cutting-edge technology, regulation tends to arrive as a patchwork: one region tightens marketing and token distribution rules, another clarifies custody and exchange requirements, and another focuses on data handling and model accountability. For AI coin projects, that means two parallel compliance tracks: crypto regulatory compliance (issuance, trading, custody, disclosures) and AI-related obligations (data provenance, safety, and transparency, especially for models used in sensitive contexts).

Macro Liquidity

Zooming out completely, macroeconomic factors influence AI coin breakouts because speculative assets amplify whatever the global risk environment is already doing. When capital is cheap and investor sentiment is positive, money flows further out on the risk curve—first into majors, then into narratives like Artificial Intelligence, and finally into smaller caps where percentage moves can be whiplash-inducing.

Interest rates and broad financial conditions set the backdrop for how aggressively traders allocate, how long they hold, and how quickly dips get bought. In looser conditions, liquidity tends to find stories with momentum, and AI is one of the stickiest stories of the decade. Macro also changes the psychology of timing: in a risk-on environment, traders pre-position for catalysts (model releases, listings, incentive seasons) because they expect follow-through.

A practical 2026 takeaway is to watch the combo, not the headline. When macro liquidity is improving and AI coin ecosystems show measurable usage gains (transactions, fees, active providers), breakouts tend to be more durable. When liquidity is deteriorating, you can still get sharp pumps—but they’re more likely to be event-driven spikes that fade unless fundamentals are strong enough to overpower the macro gravity.

Key Considerations, Risks, and Legality

Regulatory Status and Compliance

AI crypto coins face regulatory compliance pressure from multiple angles in 2026, because they touch trading, data, and sometimes “AI services” all at once. On top of that, compliance is not a global switch: it operates jurisdiction by jurisdiction, even if the token trades 24/7 everywhere.

Photo by Bram Naus on Unsplash

In the United States, the usual pinch points are federal securities laws, commodities oversight, and money-transmission rules. If a token looks like an investment contract, teams and exchanges can end up dealing with securities-style disclosures, restrictions on who can buy, and enforcement risk. Add “AI” and you also run into privacy and consumer protection expectations when projects handle user prompts, training data, or inference logs.

In the European Union, compliance tends to feel more “packaged”: licensing, whitepaper-style disclosures, marketing rules, and custody expectations are typically more standardized across member states once the framework applies. EU-style regimes often care a lot about who provides the service (issuer, broker, custodian), not just what the token is. If your AI token comes with a wallet, a hosted dashboard, or a fiat on-ramp, you can accidentally step into regulated territory without realizing it.

Across Asia, the approach is famously non-uniform. Some hubs emphasize licensing for exchanges and custodians, others focus on strict retail access rules, and some take a more restrictive stance overall. Practically, teams end up geofencing features, limiting airdrops, or splitting products: one version for “allowed” markets, another for everyone else. If you are investing, the takeaway is simple: a token’s legal risk is not only about its code—distribution, marketing, and who can access the app can change the compliance story overnight.

Market Volatility and Liquidity Risk

AI crypto coins experience extreme volatility because narratives move faster than fundamentals, and AI headlines have a jet engine strapped to them. Liquidity is the other half of the story: you can survive price swings if you can exit, but you cannot exit if the market is thin.

In practice, AI-token charts often show the classic pattern: rapid repricing around product demos, exchange listings, or partner announcements—followed by equally sharp drawdowns when expectations cool. Even without quoting a single percentage, you have likely seen the “straight up, then stair-step down” structure that happens when leveraged traders and short-term momentum dominate. The risk isn’t just that the price drops; it’s that slippage and spread widening can make a bad day worse.

Here’s how the mechanics typically bite:

- Shallow order books: A modest market order moves price more than you expect.

- Concentrated holders: A few wallets can overwhelm daily volume.

- Incentive-driven liquidity: Liquidity mining looks stable until rewards end.

- Cross-venue fragmentation: Price differs across exchanges, and arbitrage can fail during stress.

Want a quick check before you buy? Look at how the token trades during high-volume news days: does the spread stay reasonable, or does it gap out? Also, check whether liquidity is real across multiple venues or mostly sitting in one pool. If “liquidity” is just one incentivized pair, it can vanish the moment incentives change.
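The spread and slippage checks above are simple arithmetic once you have an order-book snapshot. The Python sketch below assumes you have already pulled the best bid/ask and the ask side of the book as (price, size) pairs from an exchange; the function names and sample numbers are hypothetical. It estimates the quoted spread and the average fill price of a market buy that walks up the book.

```python
def spread_bps(best_bid: float, best_ask: float) -> float:
    """Quoted spread in basis points of the mid price."""
    mid = (best_bid + best_ask) / 2
    return (best_ask - best_bid) / mid * 10_000


def market_buy_slippage(asks: list[tuple[float, float]], quote_amount: float) -> tuple[float, float]:
    """Spend `quote_amount` against asks sorted best-first.
    Returns (average fill price, slippage versus the best ask as a fraction)."""
    if quote_amount <= 0:
        raise ValueError("quote_amount must be positive")
    remaining, filled = quote_amount, 0.0
    for price, size in asks:
        level_cost = price * size
        if level_cost >= remaining:
            filled += remaining / price  # partial fill at this level
            remaining = 0.0
            break
        filled += size  # consume the whole level and move up the book
        remaining -= level_cost
    if remaining > 0:
        raise ValueError("book too thin to fill this order")
    avg_price = quote_amount / filled
    return avg_price, avg_price / asks[0][0] - 1


# Hypothetical thin book: a buy worth 2,000 in the quote currency
# already pays about 2.4% over the best ask.
asks = [(1.00, 1_000), (1.05, 1_000), (1.20, 2_000)]
avg, slip = market_buy_slippage(asks, 2_000)
print(f"spread {spread_bps(0.98, 1.00):.0f} bps, avg fill {avg:.4f}, slippage {slip:.2%}")
```

Running the same calculation on a snapshot taken during a busy news day, and again on a quiet day, is a cheap way to see whether the liquidity you are counting on actually holds up.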

Smart Contract, Bridge, and Custody Risk

Smart contracts create unstoppable execution, which is great until the code has a bug that executes unstoppable loss. In 2026, AI crypto projects often rely on multiple smart contracts (staking, rewards, data markets), plus cross-chain bridges and custody layers that multiply the attack surface.

Source: CryptoRank

The technical vulnerabilities tend to repeat across ecosystems:

- Access control failures: Admin keys, upgrade rights, or role permissions misconfigured.

- Oracle and pricing issues: External data feeds manipulated or delayed.

- Reentrancy and logic bugs: Classic smart contract pitfalls that still show up.

- Bridge message validation errors: The “one weird trick” attackers love, because bridges connect big liquidity to thin security.

Custody is the quieter risk. Many AI projects encourage users to deposit tokens into vaults, restake systems, or hosted “agent” dashboards. If you hand assets to a third-party contract or custodian, their operational security, key management, and incident response quality become your problem.

Newer standards and best practices help, but they are not magic. Audits, formal verification, bug bounties, multisig controls, time-locked upgrades, and on-chain monitoring are all positive signals—yet exploits still happen when incentives are high. Remember, “audited” is not the same as “safe,” especially when contracts are upgradeable and the upgrade path is centralized.

A good start for a mitigation mindset: treat every bridge hop as additional risk, minimize approvals, use hardware-based self-custody when possible, and assume that any contract you interact with can fail.

Protocol and Governance Risk

Protocol governance risk shows up when “decentralized decision-making” becomes slow, captured, or manipulable. AI-focused protocols are especially exposed because governance often controls sensitive levers: emissions, compute allocation, data curation rules, and even which models are considered “official.”

The first issue is low participation. Many DAOs technically allow voting, but only a small fraction of holders show up. That creates a power vacuum where whales, delegates, or insiders can steer outcomes with limited pushback. Moreover, token-weighted voting can turn governance into “pay-to-win,” even when the community vibe is friendly.

The second issue is governance complexity. AI protocols tend to ship fast: model upgrades, dataset policies, incentive tweaks. Frequent proposals increase the chance of voter fatigue and rushed approvals—exactly when a malicious proposal, a hidden parameter, or a compromised delegate can slip through.

The third issue is the upgrade paradox. Users want safety fixes and new features, but every upgrade mechanism introduces a control point. If a small multisig can upgrade contracts instantly, that’s flexible, but it is also a centralization risk and a potential single point of failure.

Mitigations exist and they are worth looking for: quorum thresholds that make sense, transparent delegation, timelocks on critical changes, independent security councils with limited scope, and clear emergency procedures. If governance documentation is vague—or if the forum is dead—take that as a signal.

AI-Specific Risks (Data Rights, Model Integrity, Compute Centralization)

AI crypto coins inherit crypto risks and add a new layer: data rights, model integrity, and compute centralization. If the token’s value depends on an AI system behaving credibly, then the AI pipeline becomes part of your risk model, not just the blockchain.

Data rights are the first flashpoint. AI projects may rely on scraped datasets, user-submitted data, or third-party content. If the rights to use that data are disputed, the project can face takedowns, restricted access, or forced changes to how it trains and serves models. Even if the chain is unstoppable, the dataset often is not.

Model integrity is the second issue. You can verify a transaction on-chain, but verifying that an AI model is the one you think it is (and that it hasn’t been backdoored) is harder. Teams may publish model hashes, inference proofs, or reproducible build pipelines, but those practices are not universal. And if the model can be swapped quietly, token incentives can be gamed—think biased outputs, manipulated rankings, or “agent” behavior tuned to favor certain counterparties.
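Checking a published model hash is the easy part of model integrity, and it is worth doing when a project offers one. Below is a minimal Python sketch, assuming the team publishes a SHA-256 digest for its weights file (the file path and function names are hypothetical). A match shows you downloaded the same bytes they published, but it cannot show that the model is free of backdoors or that the network is actually serving that file.

```python
import hashlib
from pathlib import Path


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a (possibly multi-GB) file in 1 MiB chunks to keep memory use flat."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_hash(path: Path, published_hex: str) -> bool:
    # Published digests are usually lowercase hex; normalize before comparing.
    return sha256_file(path) == published_hex.strip().lower()


# Usage (hypothetical file and digest):
# matches_published_hash(Path("model.safetensors"), "<digest from the project's docs>")
```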

Compute centralization is the third risk, and the most practical one. Many “decentralized AI” systems still depend on a small set of GPU providers, one cloud region, or a handful of node operators with the real hardware. When compute concentrates, censorship pressure rises, outages become systemic, and fees can spike. If you see a protocol claiming decentralization but requiring permissioned compute whitelists, treat it like a centralized dependency with extra steps.

If you are investing, ask: who owns the data pipeline, who can update the model, and who controls the GPUs? The token price often answers to those three parties.

Counterparty and Exchange Risk

Exchanges and counterparties create convenience, and convenience creates single points of failure. In 2026, AI tokens are frequently traded on centralized exchanges, used in lending markets, or wrapped into yield products—all of which introduce counterparty exposure that has nothing to do with smart contracts.

Exchange risk usually comes in a few flavors:

- Withdrawals paused: Congestion, “maintenance,” compliance reviews, or solvency stress.

- Delistings: Classification concerns, low volume, or policy changes.

- Custody concentration: Your assets are an IOU until you withdraw.

- Operational failures: API outages, order mismatches, or liquidation engine issues during volatility.

Counterparty risk also shows up in “off-chain” parts of AI ecosystems: hosted inference credits, centralized agent marketplaces, or enterprise partnerships where token utility depends on a company’s continued support. If that company pivots, your “utility” can evaporate while the token still trades like nothing happened.

Mitigation is not complicated, just slightly annoying: self-custody for long-term holdings, diversified venues across reputable exchange platforms, avoiding leaving large balances on any platform you don’t fully trust, and being cautious with leverage. If a platform offers high yields on an AI token, ask what the yield is paying you for: market making, lending, rehypothecation, or pure subsidy.

Tax Reporting and Recordkeeping Risks

Tax reporting becomes harder with AI crypto coins because activity is multi-layered: swaps, staking, bridging, airdrops, and “usage” payments for AI services can all create reportable events. The safest mindset is to assume that if the transaction changes your economic position, your tax authority may care.

Recordkeeping is the make-or-break piece. Transfers between your own wallets may be non-taxable in many places, but you still need to track the cost basis to prove it. Bridging is notorious here because the asset may look “different” across chains (wrapped versions, synthetic receipts), which can confuse both humans and software.
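To see why cost-basis records matter, here is a minimal FIFO ledger in Python for a single token. It assumes that transfers between your own wallets (and, in this sketch, bridging into a wrapped version of the same token) are not disposals, and it matches sales FIFO. Both are assumptions, because rules and accepted methods differ by jurisdiction. Class and method names are hypothetical; treat it as an illustration of recordkeeping.

```python
from collections import deque
from dataclasses import dataclass
from decimal import Decimal


@dataclass
class Lot:
    qty: Decimal
    unit_cost: Decimal  # cost basis per token in your reporting currency


class FifoLedger:
    """Tracks one token's acquisition lots across all wallets you control."""

    def __init__(self) -> None:
        self.lots: deque = deque()  # open lots, oldest first
        self.transfers: list = []   # audit trail of self-transfers and bridge hops

    def buy(self, qty: Decimal, unit_cost: Decimal) -> None:
        self.lots.append(Lot(qty, unit_cost))

    def record_transfer(self, qty: Decimal, source: str, destination: str) -> None:
        # Treated as a non-disposal (an assumption): lots stay untouched, but the
        # log shows that tokens sold later are the ones bought earlier.
        self.transfers.append((qty, source, destination))

    def balance(self) -> Decimal:
        return sum((lot.qty for lot in self.lots), Decimal(0))

    def sell(self, qty: Decimal, unit_price: Decimal) -> Decimal:
        """Dispose of `qty` tokens FIFO; returns realized gain (negative = loss)."""
        if qty > self.balance():
            raise ValueError("selling more than the tracked balance")
        gain, remaining = Decimal(0), qty
        while remaining > 0:
            lot = self.lots[0]
            used = min(lot.qty, remaining)
            gain += used * (unit_price - lot.unit_cost)
            lot.qty -= used
            remaining -= used
            if lot.qty == 0:
                self.lots.popleft()  # lot fully consumed
        return gain


ledger = FifoLedger()
ledger.buy(Decimal("10"), Decimal("2.00"))
ledger.buy(Decimal("5"), Decimal("4.00"))
ledger.record_transfer(Decimal("15"), "exchange", "hardware wallet")
print(ledger.sell(Decimal("12"), Decimal("5.00")))  # 32.00
```

`Decimal` is used instead of floats so that lot quantities reach exactly zero and gains do not pick up rounding noise.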

Future Trends in AI Coins

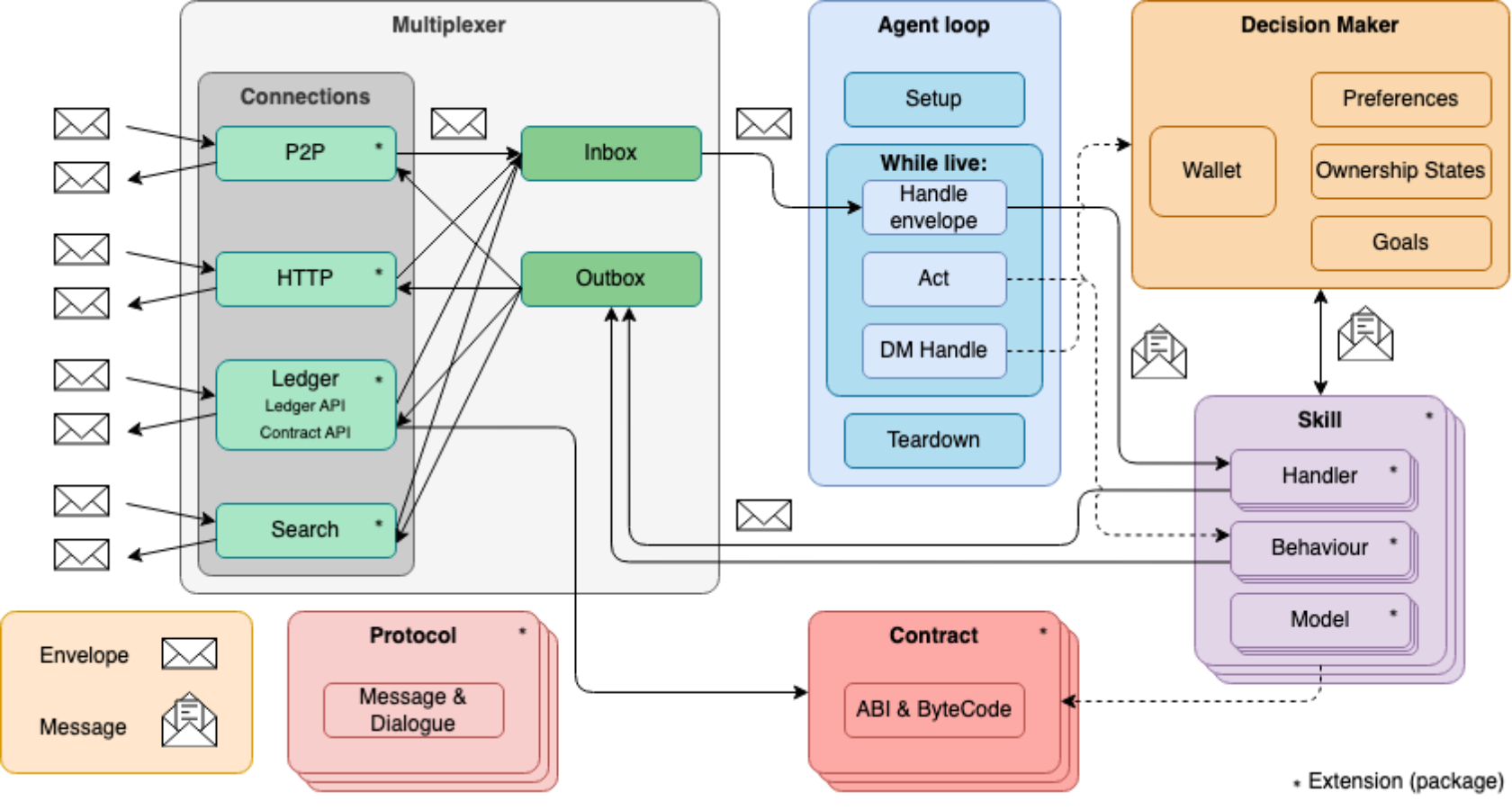

AI Agents

Main components of an AEA. Source: Olas Stack Developer Documentation

So-called Autonomous Economic Agents (AEAs) automate on-chain decisions across blockchain apps. In plain English, an AEA is an AI “worker” that can read data, choose an action, and execute it with smart contracts (often with a wallet it controls, plus guardrails). That’s the big shift for 2026: agents won’t just suggest trades, votes, or rebalances — they’ll do them, under rules you can verify.

How is that different from things like AI trading bots? AEAs are moving from single-task scripts to multi-step workflows: discover an opportunity, verify constraints, route liquidity, sign transactions, and report outcomes. In decentralized finance, that looks like an agent that continuously monitors borrowing rates, moves collateral when thresholds hit, and hedges exposure — without you hovering over a dashboard at 2 a.m.

By 2026, expect more agent “teams” where specialized models cooperate: one agent for market scanning, another for risk checks, another for execution. This is where AI crypto projects are heading conceptually — standardized agent identities, permission scopes, and auditable policy files, so users can say: “You may rebalance monthly, but you may not bridge funds, and you may not touch my cold wallet.” As a result, agent marketplaces should mature too, with reputation tied to transparent performance and slashing-style penalties when agents violate constraints.

The catch? If an agent has broad permissions, a bad model update, a poisoned prompt, or a clever exploit in a smart contract can turn “helpful automation” into a very expensive lesson. The winner won’t be the most creative agent; it will be the safest agent with the best controls.
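The sample policy from earlier (“You may rebalance monthly, but you may not bridge funds, and you may not touch my cold wallet”) can be expressed as data and checked before an agent signs anything. Below is a minimal Python sketch. The policy fields, action names, and placeholder addresses are hypothetical and do not reflect the format of Olas or any other agent framework, and “monthly” is approximated as 30 days.

```python
from dataclasses import dataclass, field

THIRTY_DAYS = 30 * 24 * 3600


@dataclass
class AgentPolicy:
    allowed_actions: set[str]
    min_interval_seconds: int
    blocked_addresses: set[str] = field(default_factory=set)


@dataclass
class Action:
    kind: str       # e.g. "rebalance" or "bridge"
    target: str     # address the action would touch
    timestamp: int  # Unix time at which the agent wants to act


def evaluate(policy: AgentPolicy, action: Action, last_run: dict[str, int]) -> tuple[bool, str]:
    """Deny by default: an action must pass every rule before it may be signed."""
    if action.kind not in policy.allowed_actions:
        return False, f"action '{action.kind}' is not permitted"
    if action.target in policy.blocked_addresses:
        return False, "target address is blocked"
    previous = last_run.get(action.kind)
    if previous is not None and action.timestamp - previous < policy.min_interval_seconds:
        return False, "too soon since the last run"
    return True, "ok"


policy = AgentPolicy({"rebalance"}, THIRTY_DAYS, {"0xCOLD_WALLET"})
print(evaluate(policy, Action("bridge", "0xPOOL", 0), {}))
print(evaluate(policy, Action("rebalance", "0xPOOL", THIRTY_DAYS), {"rebalance": 0}))
```

Ideally the same limits are also enforced outside the agent (for example by the permissions of the wallet it signs with), so a compromised model cannot simply skip its own check.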

Decentralized Compute

Decentralized compute distributes AI workloads across many providers instead of one cloud. For AI crypto, the pressure point is simple: GPUs are scarce, expensive, and increasingly mission-critical, so marketplaces that can aggregate unused capacity are positioned to matter more in 2026 than they did in 2024.

As already briefly mentioned, AI demand is not just about training anymore. Inference (running models repeatedly) eats compute too, and it shows up everywhere: wallets, trading terminals, DeFi dashboards, gaming, on-chain analytics, and support agents. Centralized providers can scale, sure, but they also create single points of failure, can change prices abruptly, and impose geographic restrictions. A decentralized compute layer offers an alternative: more suppliers, more price competition, and (in theory) fewer bottlenecks.

Projects like Render are often discussed as “GPU networks,” but the 2026 story is bigger than renting graphics card power. Expect more infrastructure around scheduling, verification, and quality-of-service: job routing that prefers certain hardware, reputation for providers, and cryptographic proofs (or at least strong attestations) that the work was done correctly. On the other hand, GPU marketplaces still have to solve messy real-world problems: uptime, bandwidth, driver versions, and predictable performance.

On-Chain AI Inference

On-chain AI inference runs parts of an AI model directly on a blockchain. That sounds wild because blockchains are slow and expensive compared to servers, and that’s exactly why it’s interesting: the goal isn’t “run GPT on Ethereum,” it’s “make small, critical decisions verifiable inside smart contracts.”

The mechanism usually looks like this: a compact model (or a distilled component) produces a result on-chain, or a proof is posted on-chain that off-chain inference followed the rules. Either way, the blockchain becomes the referee. For decentralized applications, that unlocks deterministic logic: the same input produces the same output, and anyone can check it. That’s a big deal for DeFi automation, credit scoring, liquidation logic, and parameter tuning, where trust assumptions are currently squishy.

Benefits come with obvious friction. On-chain computation is constrained by gas costs, limited throughput, and the need for deterministic execution (no “call an API and hope it behaves”). So by 2026, the practical pattern will likely be hybrid: keep heavy inference off-chain, but anchor outcomes on-chain through commitments, proofs, or challenge systems.
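The “anchor outcomes on-chain through commitments” pattern can be sketched in a few lines. In this Python example (the function names, payload fields, and salt-prefix construction are assumptions, not any specific protocol’s format), an operator hashes the model ID, inputs, and output with a random salt, posts only the hash, and reveals the data later so anyone can recompute it. Note the limit: a commitment stops the operator from changing its answer after the fact, but it does not prove the inference was computed correctly. That is the job of proofs or challenge systems.

```python
import hashlib
import json
import secrets


def commit(model_hash: str, inputs: dict, output: dict, salt: bytes) -> str:
    """Hash of salt + canonical JSON; this is the value that would be posted on-chain."""
    payload = json.dumps(
        {"model": model_hash, "inputs": inputs, "output": output},
        sort_keys=True,  # canonical key order so everyone hashes the same bytes
        separators=(",", ":"),
    ).encode()
    return hashlib.sha256(salt + payload).hexdigest()


def verify_reveal(commitment: str, model_hash: str, inputs: dict, output: dict, salt: bytes) -> bool:
    # Anyone holding the revealed data can recompute the hash and compare.
    return commit(model_hash, inputs, output, salt) == commitment


salt = secrets.token_bytes(16)  # random salt keeps the result hidden until the reveal
c = commit("model-v1", {"ltv": 0.82}, {"liquidate": True}, salt)
print(verify_reveal(c, "model-v1", {"ltv": 0.82}, {"liquidate": True}, salt))   # True
print(verify_reveal(c, "model-v1", {"ltv": 0.82}, {"liquidate": False}, salt))  # False
```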

Data Provenance

Data provenance tracks where AI training and inference data came from and whether it was altered. In AI crypto, provenance becomes crucial because “garbage in, garbage out” turns into “garbage in, funds out.” If a model makes decisions that touch wallets and smart contracts, corrupted or unverified data can translate directly into lost funds.

In practice, provenance combines cryptography with process. Datasets can be fingerprinted with hashes, stored with tamper-evident logs, and referenced on a blockchain so anyone can verify integrity over time. Detecting tampering is only part of the picture. The broader goal is accountability: who contributed the data, under what license, and with what privacy guarantees. That matters for compliance-minded products and for user trust.
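A tamper-evident log can be as simple as a hash chain: each entry’s hash covers the previous entry’s hash, so editing any past record breaks verification from that point on. Below is a minimal Python sketch. The record fields and class name are hypothetical, and anchoring the latest hash on-chain (so the head of the log cannot be quietly rewritten) is assumed rather than shown.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, record: dict) -> str:
    body = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode()).hexdigest()


class ProvenanceLog:
    """Append-only log where each entry commits to the one before it."""

    def __init__(self) -> None:
        self.entries: list = []  # (record, hash) pairs in insertion order

    def head(self) -> str:
        # The value you would periodically anchor on-chain.
        return self.entries[-1][1] if self.entries else GENESIS

    def append(self, record: dict) -> str:
        h = entry_hash(self.head(), record)
        self.entries.append((dict(record), h))  # store a copy of the record
        return h

    def verify(self) -> bool:
        prev = GENESIS
        for record, h in self.entries:
            if entry_hash(prev, record) != h:
                return False  # record or stored hash at this position was changed
            prev = h
        return True


log = ProvenanceLog()
log.append({"dataset_sha256": hashlib.sha256(b"dataset v1").hexdigest(),
            "contributor": "0xAlice", "license": "CC-BY-4.0"})
log.append({"dataset_sha256": hashlib.sha256(b"dataset v2").hexdigest(),
            "contributor": "0xBob", "license": "CC0-1.0"})
print(log.verify())  # True
log.entries[0][0]["license"] = "proprietary"  # simulate a quiet edit to history
print(log.verify())  # False
```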

By 2026, expect more “data pipelines” built like financial pipelines: ingestion rules, validation steps, and audit trails that are as formal as code reviews. Some systems will use attestations (hardware or signer-based), others will rely on decentralized storage with content addressing, and many will combine both. Still, provenance has a tricky enemy: incentive attacks. If contributors are rewarded, some will try to game the system with low-quality or adversarial data.

Cross-Chain Interoperability

Cross-chain interoperability enables AI crypto apps to move value and instructions across multiple networks. For 2026, this is not optional, because AI agents and compute markets won’t live on one chain. Users hold assets on different blockchains, DeFi liquidity is fragmented, and the best execution path often crosses ecosystems.

Interoperability comes in layers. There’s asset transfer (bridging tokens), message passing (sending instructions), and state awareness (knowing what happened on another chain). The key part is message passing, because AI agents need more than “move coin A to chain B.” They need “if condition X is true on chain 1, execute strategy Y on chain 2, then settle on chain 3.” That’s where interoperability protocols and standardized cross-chain messaging become the backbone for multi-chain automation.

Of course, bridges have historically been a high-risk zone. So the 2026 trend is toward stronger security models: better validation, more redundancy, smaller trust assumptions, and clearer failure modes. In practice, that could mean apps limiting agent permissions across chains (no blind bridging), using allowlists for destinations, and requiring multi-step confirmations for large transfers.

For example, an AEA managing a portfolio can rebalance across chains where yields are best, while keeping its accounting and rules enforced by smart contracts. The implication for users is smoother “one interface, many networks” experiences — and for the industry, a push toward interoperability standards that make AI-driven, cross-chain DeFi feel less like a juggling act and more like a product.
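The bridge safeguards mentioned above (no blind bridging, destination allowlists, extra confirmations for large transfers) translate naturally into a pre-flight check that runs before any cross-chain message is sent. Below is a minimal Python sketch; the chain names, addresses, dollar thresholds, and approval counts are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BridgeRequest:
    dest_chain: str
    dest_address: str
    amount_usd: float


# (threshold in USD, approvals required at or above it), sorted ascending.
APPROVAL_TIERS = [(10_000, 2), (100_000, 3)]


def required_approvals(amount_usd: float, tiers=APPROVAL_TIERS) -> int:
    needed = 1  # small transfers still need one sign-off
    for threshold, approvals in tiers:
        if amount_usd >= threshold:
            needed = approvals
    return needed


def authorize(req: BridgeRequest, allowlist: set[tuple[str, str]], approvals_given: int) -> bool:
    # Unknown destinations are rejected outright, whatever the amount.
    if (req.dest_chain, req.dest_address) not in allowlist:
        return False
    return approvals_given >= required_approvals(req.amount_usd)


allowlist = {("chain-b", "0xVAULT")}
print(authorize(BridgeRequest("chain-b", "0xVAULT", 500), allowlist, approvals_given=1))     # True
print(authorize(BridgeRequest("chain-b", "0xVAULT", 50_000), allowlist, approvals_given=1))  # False
print(authorize(BridgeRequest("chain-c", "0xUNKNOWN", 10), allowlist, approvals_given=3))    # False
```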

Conclusion

Artificial Intelligence reshapes AI crypto coins in 2026 by pushing the category from “interesting narrative” to “real products people actually use.” That is the core takeaway behind the market trends we covered: winners are increasingly the projects that can prove utility (and revenue) instead of just promising a futuristic roadmap. In other words, the market is getting pickier, and that’s a good thing for anyone trying to build a sensible investment thesis.

If you found this review of the top AI coins to buy helpful, visit the ChangeHero blog for more deep dives like this one. Follow us on social media (X, Facebook, and Telegram) for a steady stream of engaging content about AI and crypto, low-cap AI crypto gems, and more.

Frequently Asked Questions About AI Crypto

What qualifies as an AI crypto coin?

An AI crypto coin powers, pays for, or secures an AI-related service on-chain (not just a token with “AI” in the ticker). The key part is utility: the token should have a clear job inside a product where AI work (training, inference, data coordination, agent execution) is happening.

Use cases usually fall into a few buckets: decentralized compute, model marketplaces, data markets, and autonomous agents that transact through smart contracts. On the other hand, a token that only posts AI-themed marketing and has no measurable AI workflow is just a “narrative coin”—and those tend to swing hardest when the hype cycle shifts.

Which AI crypto coins have the highest market capitalization in 2026?

Bittensor, NEAR Protocol, and Render sit among the most recognized top-tier AI-adjacent networks by market capitalization in 2026, largely because they map to real demand: incentives for model/agent networks (Bittensor), scalable on-chain infrastructure with AI positioning (NEAR Protocol), and GPU rendering/compute rails that overlap with AI workloads (Render). Here’s the important detail: “AI coins” is a category where classification moves fast, so leadership can shift quickly when a token’s utility (or narrative) changes.

What are the main risks specific to AI crypto coins?

AI crypto coins carry both crypto-native risks and AI-specific risks, and the combination is what makes them research-sensitive. Market capitalization can look impressive, but it does not remove the underlying fragility if liquidity dries up or the utility thesis breaks.

On the market side, the usual suspects are:

- Liquidity risk: smaller AI tokens can have thin order books, so entries and exits cost more than you expect (slippage is the silent fee).

- Narrative-driven volatility: AI is a headline magnet, which means prices can move on announcements, partnerships, and category hype—sometimes before real adoption shows up.

- Regulatory risks: projects touching data markets, model outputs, or “AI decisioning” can face extra scrutiny (and that can affect exchange listings, access, or growth).

The AI-specific risks are where things get different from, say, a DeFi governance token:

- Data integrity risk: if training data is low-quality, poisoned, or unverifiable, the whole network can reward the wrong behavior (garbage-in, tokens-out).

- Model bias and output quality: biased or inconsistent outputs can damage reputation, reduce demand, and create legal exposure for integrators.

- Verification difficulty: validating AI work is hard; if the system can’t reliably score results, bad actors can farm rewards.

- Centralization creep: AI infra often concentrates around whoever controls scarce compute or proprietary datasets, which can undermine the “decentralized” promise.

How can AI coins be evaluated beyond price performance?

AI coins should be evaluated by whether they deliver measurable utility and sustainable demand, not just whether the chart looks pretty this month. Price is a scoreboard, but it’s not the whole game (especially in AI, where hype can front-run reality).

A practical evaluation checklist usually starts with utility. Then look at adoption metrics that indicate traction beyond speculation. Network effects matter a lot in AI marketplaces. The important detail is that two-sided networks compound: more suppliers (compute/models/data) attract more buyers, which attracts more suppliers. When that flywheel spins, market capitalization is more likely to be defended by fundamentals rather than vibes.

How should AI coins be allocated in a diversified portfolio?

AI crypto coins should be allocated in tiers based on market capitalization, liquidity, and thesis strength, with strict limits that assume higher volatility and higher failure rates than large-cap crypto. In other words: you can love the theme and still size it like a venture bet.
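Tiered sizing with hard limits is easy to encode, so it does not get negotiated away in the middle of a hype cycle. The Python sketch below clips each position to its tier’s cap, then scales the whole AI sleeve down if it exceeds an overall cap. The tier names, tickers, and percentages are placeholders chosen only to exercise the logic, not suggested allocations.

```python
def cap_allocations(
    weights: dict[str, float],
    tier_of: dict[str, str],
    tier_caps: dict[str, float],
    sleeve_cap: float,
) -> dict[str, float]:
    """All values are fractions of the total portfolio (0.05 = 5%)."""
    # Step 1: no single position may exceed the cap for its tier.
    capped = {asset: min(w, tier_caps[tier_of[asset]]) for asset, w in weights.items()}
    # Step 2: if the AI sleeve as a whole is too big, shrink every position proportionally.
    total = sum(capped.values())
    if total > sleeve_cap:
        scale = sleeve_cap / total
        capped = {asset: w * scale for asset, w in capped.items()}
    return capped


targets = {"LARGE_AI": 0.08, "MID_AI": 0.03, "SMALL_AI": 0.01}
tiers = {"LARGE_AI": "large", "MID_AI": "mid", "SMALL_AI": "small"}
caps = {"large": 0.05, "mid": 0.02, "small": 0.005}
print(cap_allocations(targets, tiers, caps, sleeve_cap=0.06))
```

In this example the per-tier caps bring the sleeve to 7.5% of the portfolio, so the second step scales every position down proportionally to fit the 6% sleeve cap.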